Co-Founder Taliferro

Data and SMOTE: Enhancing Model Accuracy for Businesses

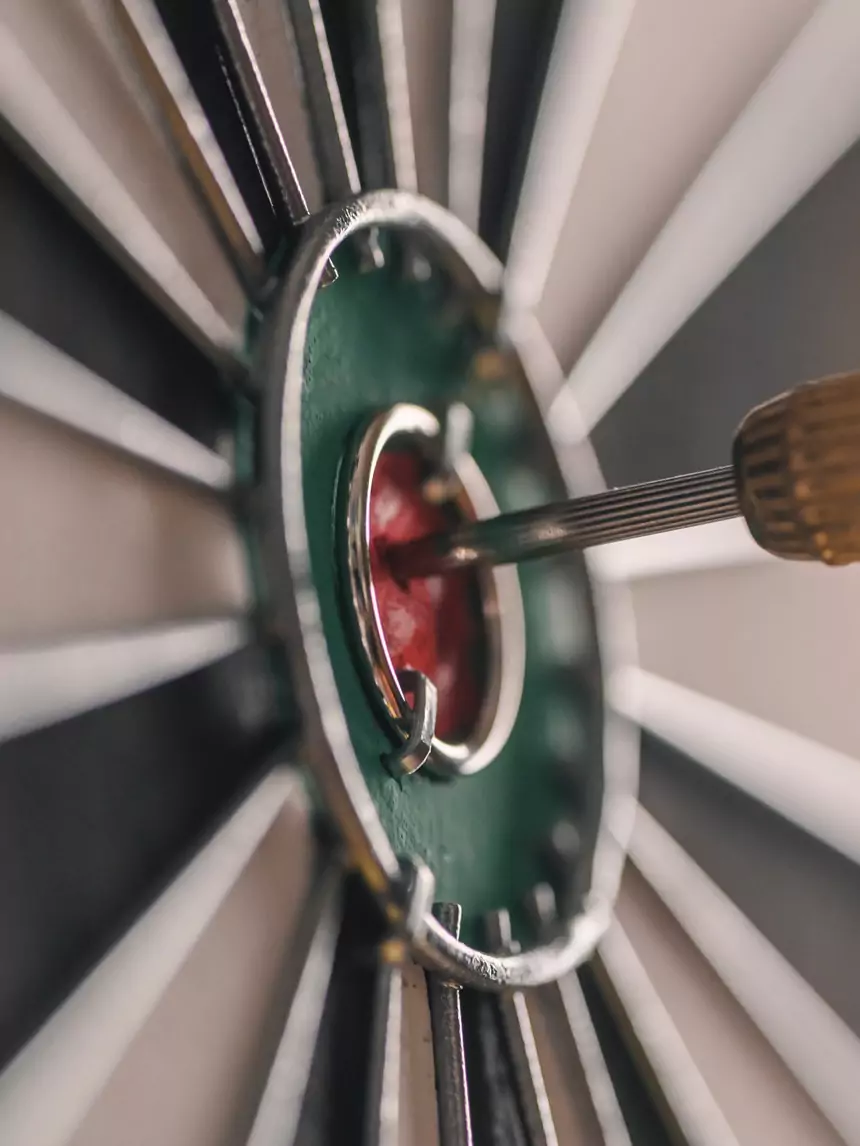

Accurate predictions from machine learning models are a valuable business asset. However, a common hurdle often undermines these models: data imbalance, where the number of observations in one class far outweighs those in the others and skews the model's performance. SMOTE (Synthetic Minority Over-sampling Technique) is a widely used approach designed to address this challenge.

Understanding Data Imbalance

Data imbalance is pervasive across business applications, from detecting fraudulent transactions to predicting customer churn. When the dataset is skewed, predictive models tend to favor the majority class and overlook the minority class, which is often the one the business cares about most: the fraudulent transaction, the customer about to leave. The consequence? A model that's ostensibly accurate but practically inadequate, leading to poor decision-making that could cost businesses dearly.
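To make the "ostensibly accurate but practically inadequate" point concrete, here is a minimal sketch. The counts (990 legitimate and 10 fraudulent transactions) are made up for illustration and do not come from a real project. A model that always predicts the majority class looks excellent on accuracy and catches no fraud at all.

```python
# Hypothetical labels: 0 = legitimate (majority), 1 = fraud (minority).
y_true = [0] * 990 + [1] * 10

# A degenerate "model" that always predicts the majority class.
y_pred = [0] * len(y_true)

correct = sum(t == p for t, p in zip(y_true, y_pred))
accuracy = correct / len(y_true)

# Recall on the minority class: frauds caught / frauds that actually occurred.
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
minority_recall = true_positives / sum(t == 1 for t in y_true)

print(f"accuracy={accuracy:.2f}, minority recall={minority_recall:.2f}")
# accuracy=0.99, minority recall=0.00
```

This is why imbalanced problems are usually judged on minority-class recall, precision, or F1 rather than on accuracy alone.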

The Basics of SMOTE

Introduced by Nitesh Chawla and colleagues in 2002, SMOTE is one of the most widely used techniques for tackling data imbalance. It synthesizes new instances of the minority class by interpolating between an existing minority sample and one of its nearest minority-class neighbors. Unlike simple oversampling, which duplicates minority samples, SMOTE adds variety to the dataset and reduces the risk of the model overfitting to repeated instances. The goal is a model that learns the minority class properly from a more balanced training set. The synthetic points are plausible interpolations, not real observations, so the balanced set is a training aid rather than a truer picture of the real world.
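The interpolation idea can be sketched in a few lines of plain Python. This is an illustrative simplification, not the reference implementation: the function name `smote_sketch`, its parameters, and the sample points are assumptions made for this example, and base points are chosen at random rather than visited in turn as in the original algorithm.

```python
import math
import random


def smote_sketch(minority, n_synthetic, k=5, seed=0):
    """Create n_synthetic points by interpolating between minority samples.

    minority: list of equal-length numeric feature lists (minority class only).
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_synthetic):
        # Pick a random minority sample as the base point.
        i = rng.randrange(len(minority))
        base = minority[i]
        # Find its k nearest minority neighbours by Euclidean distance.
        others = [p for j, p in enumerate(minority) if j != i]
        others.sort(key=lambda p: math.dist(base, p))
        neighbour = rng.choice(others[:k])
        # Place the new point at a random position on the line segment
        # between the base point and the chosen neighbour.
        gap = rng.random()
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


# Hypothetical minority-class samples with two features each.
minority_points = [[1.0, 1.0], [1.2, 0.9], [0.8, 1.1], [1.1, 1.3]]
new_points = smote_sketch(minority_points, n_synthetic=6, k=2)
print(len(new_points))  # 6
```

Because every synthetic point lies on a segment between two real minority samples, the new data stays inside the region the minority class already occupies. That is also the limitation: if a base point is noise, its synthetic offspring will be noise too.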

Why Businesses Should Care About Balancing Data

- Improved Model Accuracy: A personal anecdote underscores this point. In a project aimed at predicting loan defaults, applying SMOTE transformed the usefulness of our model. Before SMOTE, the model reported a misleadingly high accuracy because it predicted non-default almost every time. After SMOTE, it identified defaults far more reliably, which made it much more applicable in the real world.

- Better Business Decisions: Accurate models foster informed strategic decisions. For instance, in targeting marketing campaigns, balanced data can help identify niche customer segments that are likely to respond, optimizing resource allocation and maximizing ROI.

- Fairness and Bias Reduction: Balancing data can also be a step toward ethical AI. By giving underrepresented classes adequate weight in training, businesses can reduce one source of bias in automated decision-making, promoting fairness and inclusivity.

Implementing SMOTE in Business Applications

The application of SMOTE is not limited to any single domain. From improving the accuracy of fraud detection systems in financial services to refining customer segmentation in marketing, SMOTE has broad utility. Integrating SMOTE into the data preprocessing pipeline involves identifying key features, setting aside a test set, applying SMOTE to the training data only to create synthetic samples, and then training the model on this enriched training set. The test set should stay untouched so that evaluation reflects real, imbalanced data. The transformation it brings is not just in model performance but in the depth of insights businesses can derive.
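One detail of the pipeline is worth making explicit: synthetic samples should be generated from the training data only, after the test set has been set aside. The sketch below shows that ordering on toy data. The helper names (`stratified_split`, `oversample_minority`), the Gaussian toy data, and the 25% test fraction are all assumptions for illustration. In practice a library implementation of SMOTE would normally replace the hand-written oversampling step.

```python
import math
import random

rng = random.Random(42)

# Hypothetical toy data: 40 majority rows (label 0), 8 minority rows (label 1).
rows = [([rng.gauss(0, 1), rng.gauss(0, 1)], 0) for _ in range(40)]
rows += [([rng.gauss(3, 1), rng.gauss(3, 1)], 1) for _ in range(8)]


def stratified_split(rows, test_fraction, rng):
    """Hold out test_fraction of each class so both sets keep the class ratio."""
    train, test = [], []
    for label in sorted({y for _, y in rows}):
        group = [r for r in rows if r[1] == label]
        rng.shuffle(group)
        cut = int(len(group) * test_fraction)
        test += group[:cut]
        train += group[cut:]
    return train, test


def oversample_minority(train, minority_label, rng, k=3):
    """Add interpolated minority rows until the two classes are the same size."""
    minority = [x for x, y in train if y == minority_label]
    needed = len(train) - 2 * len(minority)  # majority count minus minority count
    synthetic = []
    for _ in range(max(needed, 0)):
        base = rng.choice(minority)
        neighbours = sorted((p for p in minority if p is not base),
                            key=lambda p: math.dist(base, p))[:k]
        other = rng.choice(neighbours)
        gap = rng.random()
        point = [b + gap * (o - b) for b, o in zip(base, other)]
        synthetic.append((point, minority_label))
    return train + synthetic


# Step 1: split first, so the test set contains only real observations.
train, test = stratified_split(rows, test_fraction=0.25, rng=rng)
# Step 2: oversample the training portion only.
balanced_train = oversample_minority(train, minority_label=1, rng=rng)
# Step 3: train any classifier on balanced_train and evaluate it on test.
print(len(train), len(balanced_train), len(test))  # 36 60 12
```

If the oversampling ran before the split, synthetic points interpolated from test rows could end up in the training set, and the reported performance would be optimistic.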

Challenges and Considerations

While SMOTE is powerful, it's not without challenges. Overfitting remains a risk, especially when synthetic samples are interpolated from noisy or mislabeled minority points and so amplify that noise. Moreover, SMOTE's efficacy can vary across datasets and problem domains. Best practices include careful hyperparameter tuning (for example, the number of nearest neighbors and the target class ratio), employing under-sampling techniques alongside SMOTE, and rigorous cross-validation, with SMOTE applied inside each training fold, to ensure models remain generalizable and robust.
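For cross-validation to be rigorous, the resampling step has to happen inside each fold, after the validation rows have been set aside. The following sketch shows that structure. The function `cross_validate` and its `resample`, `fit`, and `score` parameters are hypothetical names for this example, and the stand-in components below it (duplication instead of real SMOTE, a one-feature threshold "model") exist only to make the sketch runnable.

```python
import random


def cross_validate(rows, k, resample, fit, score, seed=0):
    """k-fold cross-validation that resamples only the training folds.

    rows:     list of (features, label) pairs.
    resample: function(train_rows) -> train_rows with extra minority rows.
    fit:      function(train_rows) -> model.
    score:    function(model, validation_rows) -> float.
    """
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    # A stable sort by label followed by round-robin dealing keeps the
    # class ratio roughly equal across folds.
    shuffled.sort(key=lambda r: r[1])
    folds = [shuffled[i::k] for i in range(k)]
    scores = []
    for i, validation in enumerate(folds):
        train = [r for j, fold in enumerate(folds) if j != i for r in fold]
        # Resampling happens here, after the validation fold is set aside,
        # so no synthetic row is derived from a validation row.
        model = fit(resample(train))
        scores.append(score(model, validation))
    return scores


# Stand-in components, purely for demonstration.
def duplicate_minority(train):
    return train + [r for r in train if r[1] == 1]  # placeholder for SMOTE


def fit_midpoint(train):
    def class_mean(label):
        values = [x[0] for x, y in train if y == label]
        return sum(values) / len(values)
    # "Model": a threshold halfway between the two class means.
    return (class_mean(0) + class_mean(1)) / 2


def minority_recall(threshold, validation):
    positives = [x for x, y in validation if y == 1]
    return sum(x[0] > threshold for x in positives) / len(positives)


# Hypothetical, well-separated toy data: 20 majority rows, 5 minority rows.
rows = [([i / 20], 0) for i in range(20)] + [([10 + i / 5], 1) for i in range(5)]
scores = cross_validate(rows, k=5, resample=duplicate_minority,
                        fit=fit_midpoint, score=minority_recall)
print(scores)
```

The toy classes are trivially separable, so the scores themselves are not interesting. The point is the order of operations inside the loop.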

Future of Data Balancing Techniques

The landscape of data balancing techniques is dynamic, with ongoing research introducing new methods that promise even greater efficacy and versatility. From adaptive synthetic sampling to integration with deep learning architectures, the future holds the promise of more sophisticated tools for businesses to leverage in their pursuit of model accuracy and innovation.

Conclusion

The journey towards achieving balanced data, and consequently, more accurate and fair predictive models, is both a technical challenge and a strategic imperative for businesses. SMOTE stands out as a crucial tool in this endeavor, enabling businesses to unlock new levels of efficiency and insight from their data analytics practices.

For businesses looking to refine their predictive models, embracing an API-first approach and integrating techniques like SMOTE into their analytics workflow is a step in the right direction. Consulting with data science professionals can provide the expertise needed to navigate the complexities of data balancing and harness the full potential of machine learning models.

FAQ Section

What is data imbalance, and why is it a problem?

Data imbalance occurs when the distribution of classes in a dataset is uneven, leading to models that are biased toward the majority class. This can severely impact the model's ability to make accurate predictions for the minority class, which is often of greater interest.

How does SMOTE improve model performance?

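By generating synthetic samples of the minority class, SMOTE balances the training set, allowing models to learn from a more varied set of minority observations. This typically improves the model's ability to detect the minority class (its recall), sometimes at the cost of more false positives, so the gain should be judged on metrics such as recall, precision, and F1 rather than on overall accuracy alone.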

Can SMOTE be used for all types of data?

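Not directly. SMOTE interpolates between feature values, so it is primarily designed for classification tasks with continuous numerical data. Variations of the technique, such as SMOTE-NC for datasets that mix numerical and categorical features, can accommodate categorical data, and others are being developed for a wider range of applications.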

What are some common pitfalls in using SMOTE, and how can they be avoided?

One common pitfall is creating synthetic samples that over-represent noise, leading to overfitting. This can be mitigated by combining SMOTE with under-sampling of the majority class, and by applying rigorous cross-validation techniques to evaluate model performance.
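As a rough illustration of the under-sampling half of that advice, the sketch below randomly drops majority rows until the majority class is at most a chosen multiple of the minority class. The function name `undersample_majority`, the `ratio` parameter, and the 1000-to-50 toy counts are assumptions for this example. A SMOTE step would then run on the reduced set.

```python
import random


def undersample_majority(rows, majority_label, ratio, seed=0):
    """Randomly keep at most ratio * (minority count) majority rows."""
    rng = random.Random(seed)
    majority = [r for r in rows if r[1] == majority_label]
    minority = [r for r in rows if r[1] != majority_label]
    keep = min(len(majority), int(ratio * len(minority)))
    return rng.sample(majority, keep) + minority


# Hypothetical data: 1000 majority rows (label 0), 50 minority rows (label 1).
rows = [([float(i)], 0) for i in range(1000)]
rows += [([float(i)], 1) for i in range(50)]

reduced = undersample_majority(rows, majority_label=0, ratio=4)
majority_left = sum(1 for _, y in reduced if y == 0)
minority_count = sum(1 for _, y in reduced if y == 1)

# Synthetic rows needed to reach a 1:1 ratio, before and after under-sampling.
print(1000 - minority_count, majority_left - minority_count)  # 950 150
```

With fewer synthetic rows to generate, each real minority sample is reused less often, which limits how much any noisy point gets amplified. The trade-off is that some real majority data is discarded.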

Are there alternatives to SMOTE for balancing datasets?

Yes, there are several alternatives to SMOTE, including ADASYN (Adaptive Synthetic Sampling) and Borderline-SMOTE. ADASYN generates more samples around minority points that are harder to learn, while Borderline-SMOTE focuses on minority points near the decision boundary. Each method has its own advantages, and which one fits depends on the specific characteristics of the dataset.
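To show how an adaptive method differs from plain SMOTE, the sketch below covers only the allocation step in the spirit of ADASYN: each minority point receives a share of the synthetic samples in proportion to how many of its nearest neighbours belong to the majority class. The function name `adasyn_allocation` and the one-feature toy data are assumptions for this example, and the interpolation step that would follow is omitted.

```python
import math


def adasyn_allocation(rows, minority_label, n_synthetic, k=5):
    """Return how many synthetic points to create around each minority row."""
    minority = [x for x, y in rows if y == minority_label]
    ratios = []
    for x in minority:
        # k nearest neighbours of x among all other rows, whatever their class.
        neighbours = sorted((r for r in rows if r[0] is not x),
                            key=lambda r: math.dist(x, r[0]))[:k]
        majority_count = sum(1 for _, y in neighbours if y != minority_label)
        # Share of majority neighbours: high means "hard to learn".
        ratios.append(majority_count / len(neighbours))
    total = sum(ratios)
    if total == 0:
        return [0] * len(minority)  # no minority row has majority neighbours
    return [round(n_synthetic * r / total) for r in ratios]


# Hypothetical one-feature data: majority at 0..4, one minority point at 5
# right next to them, and three minority points far away at 20..22.
rows = [([float(i)], 0) for i in range(5)]
rows += [([5.0], 1), ([20.0], 1), ([21.0], 1), ([22.0], 1)]

allocation = adasyn_allocation(rows, minority_label=1, n_synthetic=10, k=3)
print(allocation)  # [10, 0, 0, 0]
```

Here every synthetic sample is assigned to the minority point sitting next to the majority class, whereas plain SMOTE would spread them across all four minority points.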

Tyrone Showers